Hello all!

So a while ago we started talking about this userops thing.

Basically, the idea is "deployment for the people", focusing on user

computing / networking freedom (in contrast to "devops", benefits to

large institutions are sure to come as a side effect, but are not the

primary focus). There's kind of a loose community surrounding the term

now, and a number of us are working towards solutions. But I think

something that has been missing for me at least is something to test

against. Everyone in the group wants to make deployment easier. But

what does that mean?

This is an attempt to sketch out requirements. Keep in mind that I'm

writing out this draft on my own, so it might be that I'm missing some

things. And of course, some of this can be interpreted in multiple

ways. But it seems to me that if we want to make running servers

something for mere mortals to do for themselves, their friends, and

their families, these are some of the things that are needed:


Free as in Freedom:

I think this one's a given. If your solution isn't free and

open source software, there's no way it can deliver proper

network freedoms. I feel like this goes without saying, but

it's not considered a requirement in the "devops" world... but

the focus is different there. We're aiming to liberate users,

so your software solution should of course itself start with a

foundation of freedom.

Reproducible:

It's important to users that they be able to have the same

system produced over and over again. This is important for

experimenting with a setup before deployment, for ensuring that

issues are reproducible, and for friends and communities helping each

other debug problems when they run into them. It's also

important for security; you should be able to be sure that the

system you have described is the system you are really running,

and that, if someone has compromised your system, you are

able to rebuild it. And you shouldn't be relying on someone to

build a binary version of your system for you, unless there's a

way to rebuild that binary version yourself and you have a way

to be sure that this binary version corresponds to the system's

description and source. (Use the source, Luke!)

Nonetheless, I've noticed that when people talk about

reproducibility, they sometimes are talking about two distinct

but highly related things.


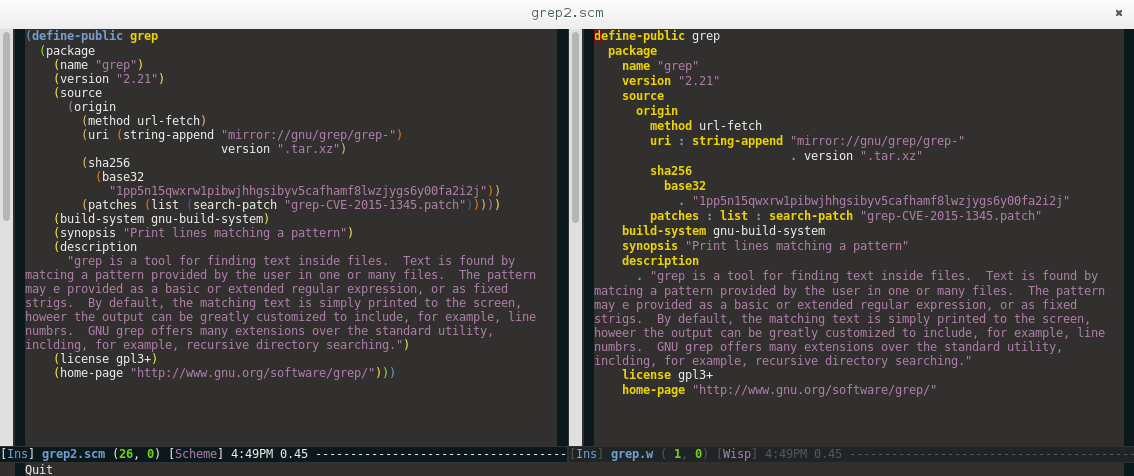

Reproducible packages:

The ability to compile from source any given package in a

distribution, and to have clear methods and procedures to do

so. While this has been a given in the free software world for a

long time, there's been a trend in the devops-type world

towards deciding that packaging and deployment in

modern languages have gotten too complex, and to simply rely on

some binary deployment instead. For reasons described above and

more, you should be able to rebuild your packages... *and*

all of your packages' dependencies... and their dependencies

too. If you can't do this, it's not really reproducible.

An even better goal is to guarantee not only that packages

can be built, but that they are byte-for-byte identical to

each other when built upon all their previous dependencies

on the same architecture. The Debian Reproducibility Project

is a clear example of this principle in action.
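As a rough sketch of what "byte-for-byte identical" means in practice, here is one way to compare two independent builds of the same package by hashing their output. The function names and the sample byte strings are made up for illustration; a real check would read the built artifacts from disk.

```python
import hashlib


def digest(artifact: bytes) -> str:
    """Return the SHA-256 hex digest of a built artifact's bytes."""
    return hashlib.sha256(artifact).hexdigest()


def is_reproducible(build_a: bytes, build_b: bytes) -> bool:
    """Two builds count as reproducible only if every byte matches."""
    return digest(build_a) == digest(build_b)


# Hypothetical outputs of two independent builds of the same source.
first_build = b"program bytes"
second_build = b"program bytes"

# A hypothetical build that embedded the build time in its output,
# which is a common reason two builds of the same source differ.
timestamped_build = b"program bytes, built at 12:00"

print(is_reproducible(first_build, second_build))
print(is_reproducible(first_build, timestamped_build))
```

The first comparison succeeds and the second fails, which is the point: anything that leaks the build environment into the output breaks the guarantee.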

Reproducible systems:

Take the package reproducibility description above, and

apply it to a whole system. It should be possible to, one

way or another, either describe or keep record of (or even

better, both) the system that is to be built, and rebuild it

again. Given selected packages, configuration files, and

anything else that is not "user data" (which is addressed in

the next section), it should be possible to have the very

same system that existed previously.

As with many things on this list, this is somewhat of a

gradient. But one extrapolation, if taken far enough, I

believe is a useful one (and ties in with the "recoverable

system" part): systems should not necessarily be dependent

upon the date and time they are deployed. That is to say,

if I deployed a system yesterday, I should be able to

redeploy that same system today on an entirely new system

using all the packages that were installed yesterday, even

if my distribution now has newer, updated packages. It

should be possible for a system to be reproducible towards

any state, no matter what fixed point in time we were

originally referring to.
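To make the "yesterday's system, redeployed today" idea concrete, here is a minimal sketch that records exact package versions at deployment time and rebuilds from that record later. The archive, package names, and version numbers are all hypothetical, and the sketch assumes old versions stay available somewhere.

```python
# Hypothetical package archive in which every published version stays available.
archive = {
    "webserver": ["1.0"],
    "database": ["3.1"],
}


def newest(package: str) -> str:
    """The version a plain 'install whatever is current' would pick right now."""
    return archive[package][-1]


def record(packages: list[str]) -> dict[str, str]:
    """Record the exact versions in use at deployment time."""
    return {name: newest(name) for name in packages}


def redeploy(recorded: dict[str, str]) -> dict[str, str]:
    """Rebuild from the recorded versions, ignoring anything newer."""
    missing = [
        name for name, version in recorded.items()
        if version not in archive.get(name, [])
    ]
    if missing:
        raise LookupError(f"recorded versions no longer available: {missing}")
    return dict(recorded)


# Yesterday: deploy and keep a record of what was installed.
yesterday = record(["webserver", "database"])

# Today: the distribution has published an update...
archive["webserver"].append("1.1")

# ...but redeploying from the record still yields yesterday's system.
today = redeploy(yesterday)
print(today == yesterday)
```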

Recoverable:

Few things are more stressful than having a computer that works,

is doing something important for you, and then something

happens... and suddenly it doesn't, and you can't get back to

the point where your computer was working anymore. Maybe you

even lost important data!

If something goes wrong, it should be possible to set things

right again. A good userops system should do this. There are

two domains to which this applies:


Recoverable data:

In other words, backups. Anything that's special, mutable

data that the user wants to keep fits in this territory. As

much as possible, a userops system should seek to make

running backups easy. Identifying based on system

configuration which files to copy and helping to provide

this information to a backup system, or simply leaving

all mutable user data in an easy-to-back-up location would

save users from having to determine what to back up on their

own, which can be easily overwhelming and error-prone for an

individual.

Some data (such as data in many SQL databases) is a bit more

complex than just copying over files. For something like

this, it would be best if a system could help with setting

up this data to be moved to a more appropriate backup

serialization.
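Here is a small sketch of how a system could work out a backup plan from its own configuration, so the user doesn't have to. The `Service` type, its fields, and the paths are assumptions for illustration only; the sketch just separates data that can be copied as files from data that needs to be serialized first.

```python
from dataclasses import dataclass, field


@dataclass
class Service:
    """A hypothetical configured service and where its mutable data lives."""
    name: str
    data_paths: list[str] = field(default_factory=list)  # plain files, copy as-is
    needs_dump: bool = False  # e.g. a SQL database that must be serialized first


def backup_plan(services: list[Service]) -> dict[str, list[str]]:
    """Split services into paths to copy and services needing a dump step."""
    plan: dict[str, list[str]] = {"copy": [], "dump": []}
    for service in services:
        if service.needs_dump:
            plan["dump"].append(service.name)
        else:
            plan["copy"].extend(service.data_paths)
    plan["copy"].sort()
    return plan


services = [
    Service("mail", ["/srv/mail"]),
    Service("blog", ["/srv/blog/uploads"]),
    Service("database", needs_dump=True),
]

plan = backup_plan(services)
print(plan)
```

A backup tool could then consume `plan` directly: copy everything under `copy`, and run the appropriate export for each entry under `dump`.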

Recoverable system:

Linking somewhat to the "reproducible system" concept, a

user should be able to upgrade without fear of becoming

stuck. Upgrade paralysis is something I know I and many

others have experienced. Sometimes it even appears that an

upgrade will go totally fine, and you may have tested

carefully to make sure it will, but you do an upgrade, and

suddenly things are broken. The state of the system has

moved to a point where you can't get back! This is a

problem.

If a user experiences a problem in upgrading their system

software and configuration, they should have a good means of

rolling back. I believe this will take much of the

anxiety out of server administration especially for smaller

scale deployments... I know it would for me.
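One way to picture rollback is to treat every upgrade as a new "generation" of the system description and keep the old ones around. The class below is only a sketch under that assumption; the names are invented, and a real system would have to switch installed software, not just a dictionary.

```python
class SystemHistory:
    """Keeps every system description ever deployed, so none is lost."""

    def __init__(self, initial: dict):
        self._generations = [dict(initial)]
        self._current = 0

    @property
    def current(self) -> dict:
        """A copy of the system description that is active right now."""
        return dict(self._generations[self._current])

    def upgrade(self, changes: dict) -> None:
        """Create a new generation; earlier generations are left untouched."""
        self._generations.append({**self.current, **changes})
        self._current = len(self._generations) - 1

    def rollback(self) -> None:
        """Switch back to the generation before the active one."""
        if self._current == 0:
            raise RuntimeError("no earlier generation to roll back to")
        self._current -= 1


history = SystemHistory({"webapp": "1.0"})
history.upgrade({"webapp": "2.0"})  # the upgrade that turns out to be broken
history.rollback()                  # back to the state that worked
print(history.current)
```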

Friendly GUI:

It should be possible to install the system via a friendly GUI.

This probably should be optional; there may be lower level

interfaces to the deployment system that some users would prefer

to use. But many things probably can be done from a GUI, and

thus should be able to be.

Many aspects of configuring a system require filling in shared

data between components; a system should generally follow a

Don't Repeat Yourself type philosophy. A web application may

require the configuration details of a mail transfer agent, and

the web application may also need to provide its own details to

a web server such as Nginx or Apache. Users should have to fill

in these details in one place each, and they should propagate

configuration to the other components of the system.
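A minimal sketch of that "fill it in once" idea: the user enters each detail in a single place, and each component's configuration is derived from it. The setting names and the shape of the derived configurations are assumptions for illustration, not the real configuration format of Nginx, Apache, or any mail transfer agent.

```python
# The one place where the user fills in details (all values hypothetical).
settings = {
    "domain": "example.org",
    "mail_host": "mail.example.org",
    "mail_port": 587,
    "app_port": 8080,
}


def webapp_config(s: dict) -> dict:
    """The web application needs to know how to reach the mail transfer agent."""
    return {
        "smtp_host": s["mail_host"],
        "smtp_port": s["mail_port"],
        "listen_port": s["app_port"],
    }


def webserver_config(s: dict) -> dict:
    """The web server needs to know where the web application is listening."""
    return {
        "server_name": s["domain"],
        "proxy_to": f"127.0.0.1:{s['app_port']}",
    }


print(webapp_config(settings))
print(webserver_config(settings))
```

Changing `app_port` in `settings` updates both derived configurations at once, so the two components can't drift out of sync.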

Scriptable:

Not everyone should have to work with this layer directly, but

everyone benefits from scriptability. Having your system be

scriptable means that users can properly build interfaces on top

of your system and additional components that extend it in

directions beyond what you can build yourself. For example, you

might not have to build a web interface yourself; if your system

exposes its internals in a language capable enough to build

web applications, someone else can do that for you. Similarly

with provisioning, etc.

Working with the previous section, bonus points if the GUI can

"guide users" into learning how to work with lower level

components; the Blender UI is a good example of this, with most

users being artists who are not programmers, but hovering over

user interface elements exposes their Python equivalents, and so

many artists do not start out as developers, but become so in

working to extend the program for their needs bit by bit.

(Emacs has similar behavior, but is already built for

developers, so is not as good of an example.)

"Self Extensibility" is another way to look at this.

Collaboration friendly:

Though many individuals will be deploying on their own, many

server deployments are set up to serve a community. It should

be possible for users to help each other collaborate on

deployment. This may mean a variety of things, from being able

to collaborate on configuration, to having an easy means to

reproduce a system locally.

Additionally, many deployments share steps. Users should be

able to help each other out and share "recipes" of deployment

steps. The most minimalist (and least useful) version of this

is something akin to snippet sharing on a wiki. Most likely,

wikis already exist, so more interestingly, it should be

possible to share deployment strategies via code that is

proceduralized in some form. As such, in an ideal form,

deployment recipes should be made available similar to how

packages are in present distributions, with the appropriate

slots left open for customization for a particular deployment.
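To sketch what a shareable recipe with open slots might look like, here is one that ships with defaults and lets each deployment fill in or override the rest. The `Recipe` type and the step strings are invented for illustration and are not commands from any real tool.

```python
from dataclasses import dataclass
from string import Template


@dataclass
class Recipe:
    """A hypothetical shareable deployment recipe with slots to fill in."""
    name: str
    steps: tuple[str, ...]
    defaults: dict

    def render(self, **overrides: str) -> list[str]:
        """Fill each $slot from the defaults, then from this deployment's values."""
        values = {**self.defaults, **overrides}
        # Template.substitute raises KeyError if a slot is left unfilled.
        return [Template(step).substitute(values) for step in self.steps]


static_site = Recipe(
    name="static-site",
    steps=("install $server", "serve $root at $domain"),
    defaults={"server": "nginx", "root": "/srv/www"},
)

# One particular deployment only supplies what the recipe left open.
for step in static_site.render(domain="example.org"):
    print(step)
```

Leaving a required slot such as `domain` unfilled fails loudly instead of producing a half-configured deployment.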

Fleet manageable:

Many users have not one but many computers to take care of these

days. Keeping so many systems up to date can be very hard;

being able to do so for many systems at once (especially if your

system allows them to share configuration components) can help

a user actually keep on top of things and lead to fewer

neglected systems.

There may be different sets, or "fleets", of computers to take

care of... a user may discover that she needs to

take care of a set of computers for her (and maybe her

loved ones') personal use, but also has servers to take care

of for a hobby project, and another set of servers for work.

Not all users require this, and perhaps this can be provided on

another layer via some other scripting. But enough users are in

"maintenance overload" of keeping track of too many computers

that this should probably be provided.
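As a sketch of fleets sharing configuration components, the snippet below keeps one shared base and lets each fleet add to it or override it. The fleet names, host names, and settings are all hypothetical.

```python
# Configuration every machine should get, whichever fleet it belongs to.
SHARED = {"ssh_key_auth_only": True, "automatic_updates": True}

# Hypothetical fleets, each with its own hosts and its own adjustments.
FLEETS = {
    "home": {
        "hosts": ["laptop", "family-pc"],
        "config": {"desktop": True},
    },
    "hobby": {
        "hosts": ["wiki-server"],
        "config": {"automatic_updates": False},
    },
}


def fleet_configs(fleet_name: str) -> dict[str, dict]:
    """Return the full configuration for every host in one fleet."""
    fleet = FLEETS[fleet_name]
    merged = {**SHARED, **fleet["config"]}  # fleet settings win over shared ones
    return {host: dict(merged) for host in fleet["hosts"]}


for host, config in fleet_configs("home").items():
    print(host, config)
```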

Secure:

One of the most important and yet open ended requirements,

proper security is critical. Security decisions usually involve

tradeoffs, so what security decisions are made is left somewhat

open ended, but there should be a focus on security within your

system. Most importantly, good security hygiene should be made

easy for your users, ideally as easy or easier than not

following good hygiene.

Particular areas of interest include: encrypted communication,

preferring or enforcing key based authentication over passwords,

and isolating and sandboxing applications.

To my knowledge, at this time no system provides all the features

above in a way that is usable for many everyday users. (I've also

left some ambiguity in how to achieve these properties above,

so in a sense, this is not a pass/fail type test, but rather a set

of properties to measure a system against.) In an ideal future,

more Userops type systems will provide the above properties, and

ideally not all users will have to think too much about their

benefits. (Though it's always great to give the opportunity to

users who are interested in thinking about these things!) In the

meantime, I hope this document will provide a useful basis for

thinking about one's implementation and measuring it

against these properties!